How to deal with another algorithm update?

We are aware that the so-called “Medic Update” is old news by now. Since then we’ve already had another one: the March 2019 Core Update (AKA Florida 2). In theory, it’s a done deal and we should just move on and brace ourselves for the next update. At least that’s the impression you get from the guides and articles that pop up everywhere after a major algorithm update. However, we’re not here to tell you how to react to one specific update, but how to act consistently. Today’s Case Study should confirm that the best response to any update is to keep implementing the approach you have already adopted. It’s good to keep refining your tactics, but there’s no need to worry about every big change or to live in a state of permanent uncertainty.

Keep Calm and be consistent. That’s all it takes 🙂

Buckle up – it’s time for specifics! If you really just want the rundown, scroll down for the tl;dr version.

A brief synopsis of the situation

As you probably know, one of the most substantial changes in the SEO industry in 2018 was the Medic Update. This global algorithm update, which took place in August, hit many types of businesses, mostly those offering YMYL (Your Money or Your Life) services, i.e. those in the finance and health industries. For more information about the algorithm itself, take a look at this article.

At Neadoo, we’ve had the privilege of working with one of the biggest brands in the Lithuanian dental industry. Our story is a good indicator of what actions should be taken to rebuild a website after a drop caused by the Medic Update. Below is a Case Study detailing the entire process through which we’ve managed to completely rebuild traffic after the drop, and to drive growth even further.

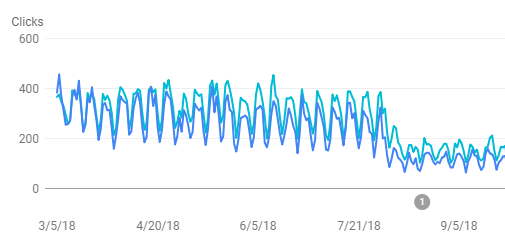

Results after the drop, or how the Medic Update affected our client’s results

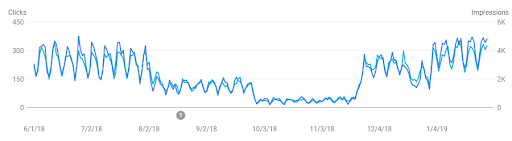

We first made contact with the client in August, right after the first drops caused by the change in the algorithm, but our talks reached an impasse. In the meantime, the client experienced another drop in traffic, this one twice as big as the last (from 200-300 clicks per day to 60-100). While we continued our negotiations, the client tried working with a local company on finding a way out of their predicament.

We can’t say exactly what actions were taken, but it’s safe to say that the results just weren’t there. This led the client to place their trust in Neadoo Digital and work with us. As a result, the client’s website has regained the top positions it once had and gained additional traffic.

Our actions – what did we do?

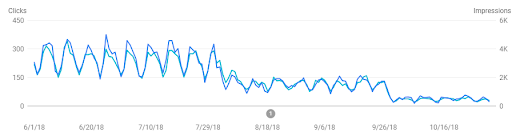

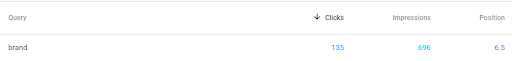

We started working on the website in November 2018, at which point organic traffic had reached a critical level. Even brand queries had dropped:

The fall was substantial – from the first position in search results, the website landed at the end of the first search results page, with an average position of 6.5.

As part of our standard procedure, we start with a comprehensive on-site audit and move on to an off-site audit later. In this situation, however, we decided to reverse the order.

Off-site audit

As mentioned above, considering the drastic drops, we decided to start with a comprehensive backlink audit. With YMYL industries (especially after the Medic Update), we pay special attention to E-A-T (Expertise, Authoritativeness, Trustworthiness), both in on-site operations and when acquiring backlinks. Domains linking to our client’s website should therefore have a close connection to the medical industry, with a focus on dentistry. In the market our client operates in (Lithuania), the options are limited. Even so, when analyzing the old link profile and planning further actions, we prioritized high-quality backlinks from expert articles created by the team, which also builds the authors’ authority.

While analyzing the link profile we were provided with, we identified a number of links we considered harmful. They had been built using old satellite sites and web directories of dubious quality at best. One of the pages had also received a large number of spammy links in a short amount of time. We submitted the domains we had filtered out through the disavow tool. Despite our reservations about the effectiveness of this tool, we decided to take the opportunity it offered.
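For reference, the file uploaded to the disavow tool is plain text: one `domain:` entry (or a full URL) per line, with `#` marking comment lines. A minimal sketch of generating such a file from an audit list could look like the one below. The function name and the example domains are hypothetical and not taken from the actual audit.

```python
def build_disavow(domains, comment=""):
    """Return disavow-file text with one `domain:` line per unique domain.

    `domains` is any iterable of domain names flagged during the audit
    (hypothetical input; this is not the client's real list).
    """
    lines = []
    if comment:
        lines.append(f"# {comment}")  # '#' lines are treated as comments
    seen = set()
    for domain in domains:
        domain = domain.strip().lower()
        if domain and domain not in seen:  # skip blanks and duplicates
            seen.add(domain)
            lines.append(f"domain:{domain}")
    return "\n".join(lines) + "\n"


if __name__ == "__main__":
    flagged = ["spam-directory.example", "Old-Satellite.example"]
    print(build_disavow(flagged, comment="Backlink audit"), end="")
```

Disavowing whole domains rather than single URLs is a judgment call; it is usually the simpler option when the entire site is low quality.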

Tip: Such an analysis can be performed with automated tools that have a large database of domains, e.g. Link Detox. However, if the number of linking domains is small, it’s a good idea to perform this type of audit manually. A manual analysis works well in niche markets such as Lithuania, where the tools mentioned above might not yield satisfactory results. You can prepare this type of audit in a simple Google Sheets spreadsheet. Start by listing links from several sources; we use GSC, Ahrefs, and Majestic. To make it as easy as possible, export one link per domain and combine the data from all tools in one sheet, filtering out domains that appear in more than one export. Ultimately, you should get a clear list of links with all the crucial data (e.g. target URL, anchor text, DR), which you can then review in search of harmful backlinks.
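If you prefer a script to a spreadsheet, the same “one row per referring domain” merge can be sketched in a few lines of Python. This is only an illustration: the column names (`source_url`, `target_url`, `anchor`) and the tool keys are assumptions, and real exports from GSC, Ahrefs, or Majestic use their own headers that you would need to map first.

```python
from urllib.parse import urlparse


def referring_domain(url: str) -> str:
    """Return the lowercase host of a URL, without a leading 'www.'."""
    if "://" not in url:
        url = "//" + url  # let urlparse treat scheme-less input as a host
    host = urlparse(url).hostname or ""
    return host[4:] if host.startswith("www.") else host


def merge_backlinks(exports: dict[str, list[dict]]) -> list[dict]:
    """Combine per-tool exports into one row per referring domain.

    `exports` maps a tool name to its rows. The keys "source_url",
    "target_url" and "anchor" are assumed names, not real export headers.
    """
    merged: dict[str, dict] = {}
    for tool, rows in exports.items():
        for row in rows:
            domain = referring_domain(row["source_url"])
            if not domain:
                continue  # skip rows without a usable source URL
            entry = merged.setdefault(domain, {
                "domain": domain,
                "source_url": row["source_url"],  # first link seen is kept
                "target_url": row.get("target_url", ""),
                "anchor": row.get("anchor", ""),
                "tools": [],
            })
            if tool not in entry["tools"]:
                entry["tools"].append(tool)  # which tools reported the domain
    return sorted(merged.values(), key=lambda e: e["domain"])
```

The `tools` column is a small convenience: a domain reported by only one tool is often worth a closer manual look.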

The next step was competitor analysis, alongside acquiring valuable thematic domains, which we used to publish expert articles written by the specialists working at the client’s clinic.

We know, we know. What a shocker. We decided not to perform the writing in-house!

But then again, doesn’t the client know what they’re talking about better than we ever possibly could?

Tip: Ahrefs makes it simple to analyze competitor backlinks. You can also use the Link Intersect tab, which lists the domains that link to your competitors but not to you.
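The idea behind a link-gap report is a simple set difference, which you can also reproduce on exported lists of referring domains. The sketch below is a rough illustration with hypothetical names and data. It is not how Ahrefs implements the feature.

```python
def link_gap(own_domains, competitor_domains, min_competitors=1):
    """List domains that link to competitors but not to your own site.

    `competitor_domains` maps a competitor name to the set of domains
    linking to it. Returns (domain, competitor_count) pairs, with the
    domains linking to the most competitors first.
    """
    own = set(own_domains)
    counts = {}
    for domains in competitor_domains.values():
        for domain in set(domains) - own:
            counts[domain] = counts.get(domain, 0) + 1
    ranked = [(d, n) for d, n in counts.items() if n >= min_competitors]
    return sorted(ranked, key=lambda item: (-item[1], item[0]))
```

Raising `min_competitors` narrows the list to domains that link to several competitors, which are usually the most realistic outreach targets.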

With that, we’ve completed the first step. What now?

On-site audit

The comprehensive on-site audit we carry out consists of many elements: from a technical analysis, through content, up to and including tips for the website’s further development. This allows us to show the client the current state of affairs and to draw up a plan that we’ll follow in all of our future work for the client. It is important that the client knows what they themselves should concentrate on.

In this case, however, the situation differed from the standard scenario, because the client had already reached the top positions for most of the top keywords in the past, and the website itself had already been partially optimized.

Technical audit

We started the technical analysis with the standard elements that contribute to the final results. First of all, we checked the page speed using several popular tools:

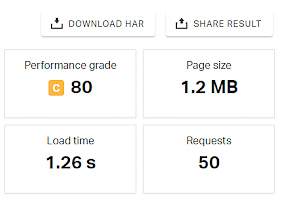

GTmetrix:

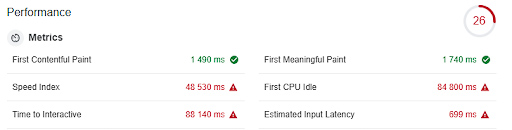

Lighthouse:

Pingdom Tools:

Despite good results, we also came up with recommendations to further improve the page loading speed, including:

- Serving resources from a consistent URL

- Minimizing the number of render-blocking resources

- Additional image compression

The next step was to rule out any problems with the site’s indexing. In this case, we worked on removing all low-value pages from the index, including pages for individual images that were redirected to their target location.

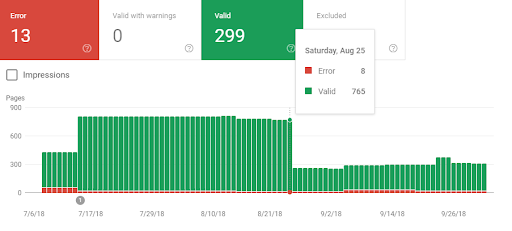

In our work, we also took into account all errors that appeared in the Google Search Console tool.

We also linked pages that were internal duplicates, all while minimizing content duplication issues.

One of the most significant problems we encountered while auditing the website was the wrongly generated URLs. Because of them, canonical links and hreflang annotations were misleading the crawlers.

Example:

<link rel="canonical" href="https://example.lt/service//">

<link rel="alternate" hreflang="en-gb" href="https://example.lt/en/en/staff/">

Once this problem was dealt with, we also corrected all internal links leading to these types of URLs.
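URL defects like the two in the example (an empty path segment and a doubled language folder) are easy to flag automatically once you have a list of canonical and hreflang targets from a crawl. Here is a minimal sketch; the function name and the two checks are our own simplification and not a full URL validator.

```python
from urllib.parse import urlparse


def url_issues(url: str) -> list[str]:
    """Return a list of suspicious patterns found in the URL's path."""
    path = urlparse(url).path
    issues = []
    if "//" in path:
        issues.append("empty path segment (double slash)")
    segments = [s for s in path.split("/") if s]
    # Two identical neighbouring segments, e.g. /en/en/, usually point to
    # a template that prepends the language folder twice.
    if any(a == b for a, b in zip(segments, segments[1:])):
        issues.append("repeated path segment")
    return issues


if __name__ == "__main__":
    for href in ("https://example.lt/service//",
                 "https://example.lt/en/en/staff/",
                 "https://example.lt/en/staff/"):
        print(href, url_issues(href))
```

A repeated segment is not always a mistake, so treat the output as a list of URLs to review rather than a list of confirmed errors.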

All URLs with errors on the client’s website were redirected to the home page with a 301 redirect, which search engines tend to treat as soft 404 errors. We dealt with this issue as well: we added a dedicated 404 page, as well as a suitable redirect map for old URLs, which we obtained from GSC and Ahrefs, among others.

Tip: Check the list of pages that are currently returning 404 errors. They can often be redirected to related landing pages, so you can regain some of the power flowing from backlinks, for example. You can obtain this kind of data from Google Search Console, though it’s a good idea to also check Ahrefs for links leading to such pages.
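A first draft of such a redirect map can be generated by fuzzy-matching the paths of dead URLs against the paths of live landing pages. The sketch below uses Python’s standard `difflib` for this. The function name, the similarity cutoff, and the example URLs are assumptions, and every suggested pair should still be reviewed by hand.

```python
from difflib import get_close_matches
from urllib.parse import urlparse


def build_redirect_map(dead_urls, live_urls, cutoff=0.6):
    """Suggest a 301 target for each dead URL based on path similarity.

    Dead URLs with no sufficiently similar live page are left out on
    purpose, so they keep returning a real 404 instead of being sent
    to the home page.
    """
    live_by_path = {urlparse(u).path.strip("/"): u for u in live_urls}
    redirects = {}
    for old in dead_urls:
        old_path = urlparse(old).path.strip("/")
        match = get_close_matches(old_path, list(live_by_path), n=1, cutoff=cutoff)
        if match:
            redirects[old] = live_by_path[match[0]]
    return redirects
```

Path similarity is only a heuristic. It works best when old and new URLs share slugs, and it says nothing about whether the content is actually related.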

Other improvements pertained to the standard elements we always take into account when optimising a website, including:

- internal linking

- website structure

- headings

- meta tags

- redirects, etc.

Due to the drop in brand queries, we also focused on reinforcing the brand. This work consisted mostly of adjusting the microdata, properly implementing the brand name in various site elements, and unifying it across all of the website’s tags.

During one of the final phases of our work, we also decided to try some unorthodox solutions. While observing the results after implementing specific parts of the optimization process, we took the risk of changing the URLs of the pages that had lost the most traffic. Correcting the URLs and setting up a proper 301 redirect from each old one proved to be an outstanding choice.

Tip: Changing the URL and 301-redirecting the old one acts as a filter of sorts, especially if the page had been heavily linked to before. Such actions always carry some risk, but in this case the approach worked.

Content analysis

While analyzing the content in terms of E-A-T, we concentrated on how topical the landing pages were. Their content had to strictly answer questions related to a given topic, while avoiding the generalities often found in content previously written purely for SEO.

We also divided the extensive landing pages into sections with the appropriate anchors, which allowed us to construct a clearer structure for the content on the page. In many cases, this solution allows for an additional element to display in the search results.

While analyzing the content, we also concentrated on reducing the number of keyword uses, replacing them with synonyms and variations.

Tip: Whenever analyzing a website after drastic drops, it’s a good idea to consider particular landing pages that have seen the biggest drop in traffic.

It’s also a good idea to analyze specific keywords in terms of saturation and link building.
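Keyword saturation can be estimated with a simple density check: the share of a page’s words taken up by a given phrase. Below is a minimal sketch with a hypothetical function name. It uses naive tokenization and does not handle inflected forms, which matters for languages such as Lithuanian.

```python
import re


def keyword_density(text: str, keyword: str) -> float:
    """Return the fraction of words in `text` covered by `keyword`.

    Matching is case-insensitive and exact (no stemming), so treat the
    result as a rough indicator only.
    """
    words = re.findall(r"\w+", text.lower())
    phrase = re.findall(r"\w+", keyword.lower())
    if not words or not phrase:
        return 0.0
    n = len(phrase)
    hits = sum(1 for i in range(len(words) - n + 1) if words[i:i + n] == phrase)
    return hits * n / len(words)
```

Comparing this value across the landing pages that lost the most traffic gives a quick way to spot pages where synonyms and variations are most needed.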

Further development opportunities

Each audit should not only provide guidelines regarding the problems that need fixing, but also plans for a website’s further development. In this case, we focused on building website trust and authority by expanding the blog section with new, valuable, expert articles, obtaining new reviews and expanding the existing pages with valid content. We’ve created a Content Plan.

In addition, we’ve highlighted the client’s results in the SERPs by using a star rating system.

Tip: There are many ways to implement this type of solution. If you don’t have substantial programming skills, you can use a ready-made solution. For example, in WordPress you can obtain a properly marked-up star rating using the WP-PostRatings plugin.

To add section anchors to long landing pages, you can use something like the Easy Table of Contents plugin.

Keep in mind that, after implementing any elements related to structured data, you should check that they’ve been implemented correctly using the Structured Data Testing Tool.
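If you would rather generate the markup yourself than rely on a plugin, star ratings are typically expressed with schema.org’s `AggregateRating` type embedded as JSON-LD. The sketch below shows one way to build such a tag. The function name and all values are hypothetical, and which item types are eligible for rich results depends on Google’s current guidelines.

```python
import json


def aggregate_rating_jsonld(item_type, name, rating_value, rating_count, best_rating=5):
    """Return a JSON-LD <script> tag describing an item's aggregate rating.

    All arguments are illustrative; use real review data from your site.
    """
    data = {
        "@context": "https://schema.org",
        "@type": item_type,
        "name": name,
        "aggregateRating": {
            "@type": "AggregateRating",
            "ratingValue": rating_value,
            "ratingCount": rating_count,
            "bestRating": best_rating,
        },
    }
    payload = json.dumps(data, ensure_ascii=False)
    return f'<script type="application/ld+json">{payload}</script>'
```

The returned string can be placed in the page template and then checked with a structured data validator before going live.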

The results

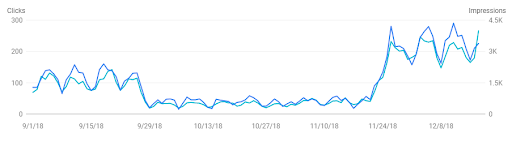

We had to wait until the end of November to see the results of our on-site and off-site operations. After less than 2 months of intense work, we reached around 6 thousand clicks! For comparison, at its lowest point the website was generating 1.2 thousand clicks per month.

We’ve managed to regain the pre-drop traffic after just 3 months. In January we reached 8 thousand clicks per month:

And we’re currently generating more growth:

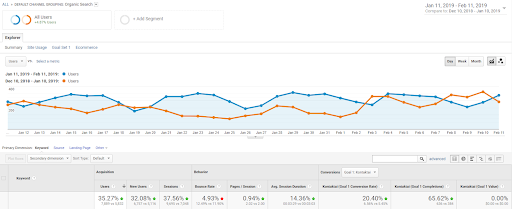

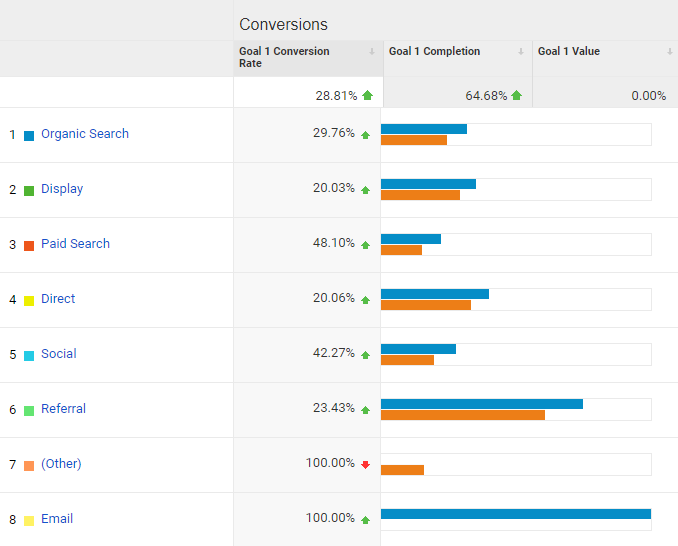

CRO and increasing conversion rates

Getting out of the crisis left behind by the Medic Update was only one of the goals we’ve managed to reach. In the end, it’s the conversion rate that really matters for the client. While working on regaining the lost traffic, we also conducted a comprehensive CRO analysis. By implementing its recommendations, we increased the website’s conversion rate by almost 29% compared to the period before the implementation.

Conclusion

As you can see, with enough involvement we managed to regain the website’s positions within a short amount of time, even after a very large drop. Very often, searching for the one factor that caused the drop after an algorithm update will simply not yield the expected results. The key here was the comprehensive analysis we performed. We know from experience that with such large changes in the algorithm, many factors are involved, and therefore it is important to make a solid analysis the starting point of your actions.

It is important that you take a closer look at the website you’re analyzing in terms of backlinks, optimization, and the content itself. Even if the site previously managed to reach top positions, this does not mean that the optimization of the page and its content is up to snuff. It’s also a good idea to take a look at the SERPs to find out who is currently in the lead and, most importantly, to see whether the intent behind the results has changed.

Sentiment may not strictly be a ranking factor, but Google is definitely flirting with the idea of testing what users enjoy. NLP (Natural Language Processing) and ML (Machine Learning) are behind all of this.

(Phew…. Looks like the “charge for position” days are finally gone for good :P)

Thanks for taking the time to read our study! Please feel free to ask us questions in the comment section – we’ll be taking a look there regularly to answer your questions. You can also write to us directly at: hello@neadoo.london

If you’re feeling particularly inquisitive, we’ve prepared a list of recommended tools 🙂

Plugins / the tools we talked about:

- Disavow Tool – https://www.google.com/webmasters/tools/disavow-links-main?pli=1

- Link profile analysis tool – https://www.linkresearchtools.com/seo-tools/link-audit/link-detox/

- Competitor backlink analysis – https://ahrefs.com/blog/get-competitors-backlinks/

- WordPress, star rating – https://wordpress.org/plugins/wp-postratings/

- WordPress, get anchors – https://pl.wordpress.org/plugins/easy-table-of-contents/

- Page Speed – https://gtmetrix.com, https://developers.google.com/web/tools/lighthouse/ , https://developers.google.com/speed/pagespeed/insights/ or https://tools.pingdom.com/

- Structured data testing tool – https://search.google.com/structured-data/testing-tool/u/0/